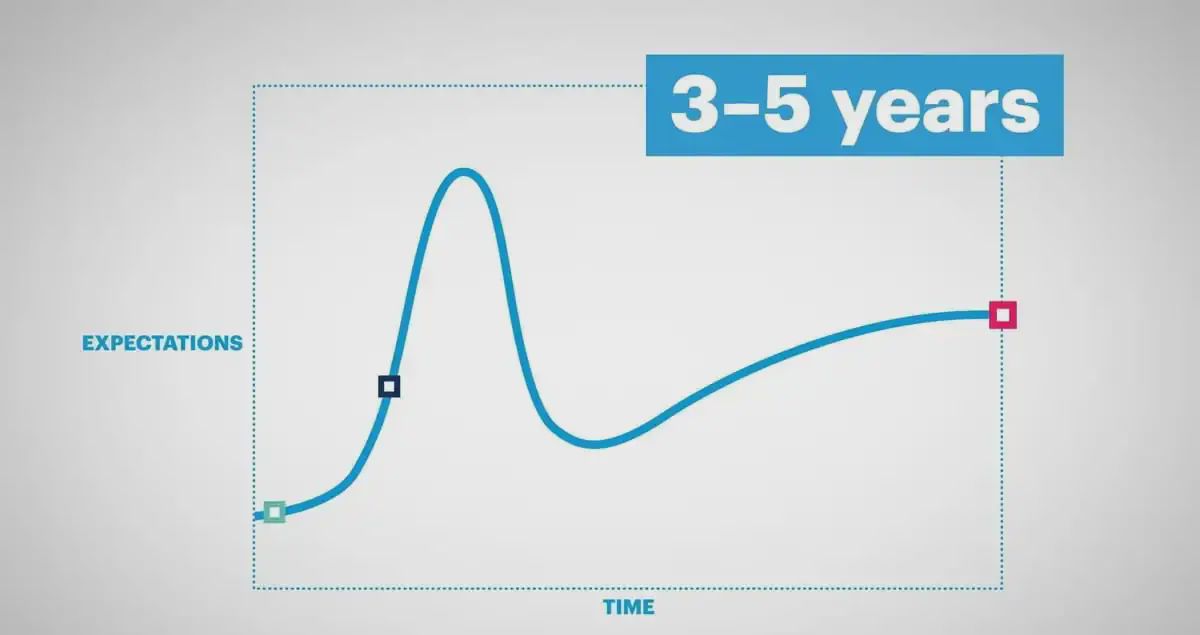

Generative AI Hype Cycle is Hitting the ‘Trough of Disillusionment’ [according to technology research and consulting firm Gartner, which has popularized the concept of the 'new technology hype cycle']

(www.404media.co)

from theangriestbird@beehaw.org to technology@beehaw.org on 18 Jul 2024 17:35

https://beehaw.org/post/15061119

Good. I’ve been aware of LLMs for about five years but I’ve been saying since the hype started that it can’t do what people are expecting/afraid it will do. It’s about time for sanity to prevail.

Same. I support AI completely as a tool to solve specific problems and that is about it. What is really cool is that AI libraries and such got a massive boost of needed development so plebs like me can code simple ANN apps in Python with little skill. Documentation has improved 100x and hardware support is fairly good.

LinkedIn seems to be an interesting indicator of where tech is in its hype cycle. I guess LinkedIn went from 100% AI-awesome-everything about 2 months ago to almost zero posts and ads about it. I suppose most of the vaporware AI products are imploding now…

Of course, algorithmic feeds are a thing, so your experience might be different.

It does seem to be the case for me - I’ve seen far fewer overexcited comments, and far more questioning AI’s present and future abilities to perform.

I think that at the bottom of the trough people will stop caring about it altogether, except for functionality that has been proven to be useful - such as a translation aid, a coding aid, or a way to quickly pull off multiple images if you don’t mind overall quality.

I just generated a fresh example highlighting it. I’ll put it inside spoilers to avoid clutter:

German and English, ChatGPT 3.5

* [Input 1] translate the following German sentence into English: “der Hund ist schlecht”.
* [Output 1] The dog is bad.
* [Input 2] Which word is being translated as “bad”?
* [Output 2] The word “schlecht” is being translated as “bad” in this context.
* [Input 3] What are other meanings or connotations of the word “schlecht”?
* [Output 3] In addition to “bad,” the word “schlecht” in German can also mean “poor,” “inferior,” “evil,” or “unwell,” depending on the context in which it is used.
* [Input 4] Now, translate the sentence “the bad dog is sleeping” into German.
* [Output 4] “Der schlechte Hund schläft.”
* [Input 5] Why is it “schlechte” in this sentence, instead of “schlecht” as in the earlier sentence?
* [Output 5] In German, adjectives change their form based on the gender, number, and case of the noun they are describing. In this case, “Hund” is a masculine noun in the nominative case, so the adjective “bad” (schlecht) needs to take the masculine nominative form, which is “schlechte.”

It’s working rather well as a translation aid as long as you know the language, as it could help you to phrase a sentence. However, once you ask for specific info - as I did in input #5 - it drops the ball; in this case outputting technically correct but misleading and incomplete info:

* The form also depends on the article. For example, if I were to use “ein” (a) instead of “der” (the), the correct form would be “schlechter”.
* The way that the output is phrased, it implies that the “schlecht” in the first sentence is not describing a masculine noun in the nominative. It is - and the actual reason why it takes the form “schlecht” is not mentioned in the output (predicatives take the base form).

[And by the way: all dogs are good.]

In the “overexcitement peak”, I think that a lot of people were focusing on the correct output, and assuming that the rest would be solved “with enough elbow grease”, playing whack-a-mole with hallucinations. Now they’re noticing that it isn’t.

You’re describing the “plateau of productivity”, which is built into this theory:

and I agree with you! it’s clear that there are good use cases for AI (see this article i posted in Science), we just need to apply it correctly and not overpromise!

Not quite - the plateau represents all useful ways that the tech can be used; what I’m describing is not even a fraction of that, it’s only the stuff that is too obvious to pass.

Thank you for the link - I’m going to give it a check now!

I end up having to play twenty questions with chatgpt. For example, I’ve been asking it for code examples for ffmpeg mpeg4 encoding with C++.

It will happily spit out completely non-working code, where the core part - feeding image frames to the encoder - works, but it doesn’t initialise or tidy up the encoding afterwards.

Until I say, “hey this code doesn’t seem to work and creates corrupted files”, and then it’s like, “oh yeah you also need to do a bunch of other stuff, just like this”. Repeat as it slowly adds more and more pieces until finally you end up with something that actually works.

Or it will happily dream up function names or mix python and C functions, or will refer to older APIs even when I’ve specifically said “use API version x.y” and so on and so forth.

If I didn’t know enough about the subject already, I’d never be able to tease out the answer. So in a sense it’s a mostly useful reference, but it can’t be relied on to actually and consistently provide a result because it’s all statistics and fuzzy text generation behind the scenes, not actual knowledge.
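To make the failure mode above concrete, here is a rough Python sketch of why feeding frames alone isn't enough. It does not use the real ffmpeg API at all: `ToyEncoder`, `HEADER`, `TRAILER` and `looks_complete` are made-up names, and the "format" is invented purely for illustration. The only point is that the header and trailer steps are easy to leave out while the frame-writing part still looks fine.

```python
import io

# Made-up magic bytes for a toy container; NOT a real video format.
HEADER = b"HDR0"
TRAILER = b"END0"


class ToyEncoder:
    """Hypothetical encoder: header on open, frames in between, trailer on close."""

    def __init__(self, sink):
        self.sink = sink

    def __enter__(self):
        self.sink.write(HEADER)  # the "initialise" step
        return self

    def write_frame(self, frame: bytes) -> None:
        # Length-prefixed frame; this is the part that tends to be right.
        self.sink.write(len(frame).to_bytes(4, "big") + frame)

    def __exit__(self, exc_type, exc, tb):
        self.sink.write(TRAILER)  # the "tidy up" step
        return False


def looks_complete(data: bytes) -> bool:
    """A file is only usable if both header and trailer are present."""
    return data.startswith(HEADER) and data.endswith(TRAILER)


def encode(frames, finalise=True) -> bytes:
    sink = io.BytesIO()
    if finalise:
        with ToyEncoder(sink) as enc:
            for frame in frames:
                enc.write_frame(frame)
    else:
        # Frames only: no header, no trailer.
        enc = ToyEncoder(sink)
        for frame in frames:
            enc.write_frame(frame)
    return sink.getvalue()


print(looks_complete(encode([b"frame-1", b"frame-2"])))         # True
print(looks_complete(encode([b"frame-1"], finalise=False)))     # False
```

Wrapping the lifecycle in a context manager is just one way to make the setup and teardown hard to forget; the real library has its own calls for those steps.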

I remember I was using it and I was so frustrated with a piece of code because it was undocumented, so I decided to ask ChatGPT. It said to use this Python method that I didn’t know existed, and I was so happy because it solved my exact problem. Of course it turned out that the method didn’t exist at all and it had just completely made it up. Which, great, probability says that there should be a method that does this, but there obviously isn’t. So why would you recommend it?
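One cheap sanity check for a suggested Python method is to ask the object itself before building anything on top of it. A minimal sketch, using only the built-ins `getattr` and `callable`; the helper name `call_if_exists` and the method name `to_headline_case` are made up for the example:

```python
def call_if_exists(obj, method_name, *args, **kwargs):
    """Call obj.<method_name>(...) only if that method really exists."""
    method = getattr(obj, method_name, None)
    if not callable(method):
        raise AttributeError(
            f"{type(obj).__name__} has no callable {method_name!r}"
        )
    return method(*args, **kwargs)


text = "trough of disillusionment"

# A real str method: works as expected.
print(call_if_exists(text, "title"))  # Trough Of Disillusionment

# A plausible-sounding but made-up method name: fails loudly and early.
try:
    call_if_exists(text, "to_headline_case")
except AttributeError as err:
    print(err)  # str has no callable 'to_headline_case'
```

It only tells you whether the name exists, not whether it does what the bot claimed, so checking the actual documentation is still needed.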

I find it interesting that you’re using the bot for something different (coding aid) than I am (translation aid), and yet the conclusion is the same - you need previous knowledge of what you’re asking to sort out the bullshit being generated by a system that doesn’t really know anything.

To be honest, I was surprised it had any idea about FFMPEG. The biggest problem is that it sounds so authoritative.

If it said, “hey I don’t know a huge amount about X” then you could work with that. But it will blithely say “no problem” and spit out 6 pages of non working code that you then have to debug further, and if you don’t know the terms in the area you’re working in you end up blundering around trying to find the right trigger word to get what you want.

I’ve been tasked with some Notion testing and research this week. I tried asking ChatGPT some simple questions, and it kept feeding me this exact scenario over and over. I guess Notion doesn’t like any kind of recursion. Or at least not without extensions being added.

When people constantly lie and grift, it’s normal and healthy to become disillusioned.

But I thought ~~blockchain~~ AI was going to change everything!