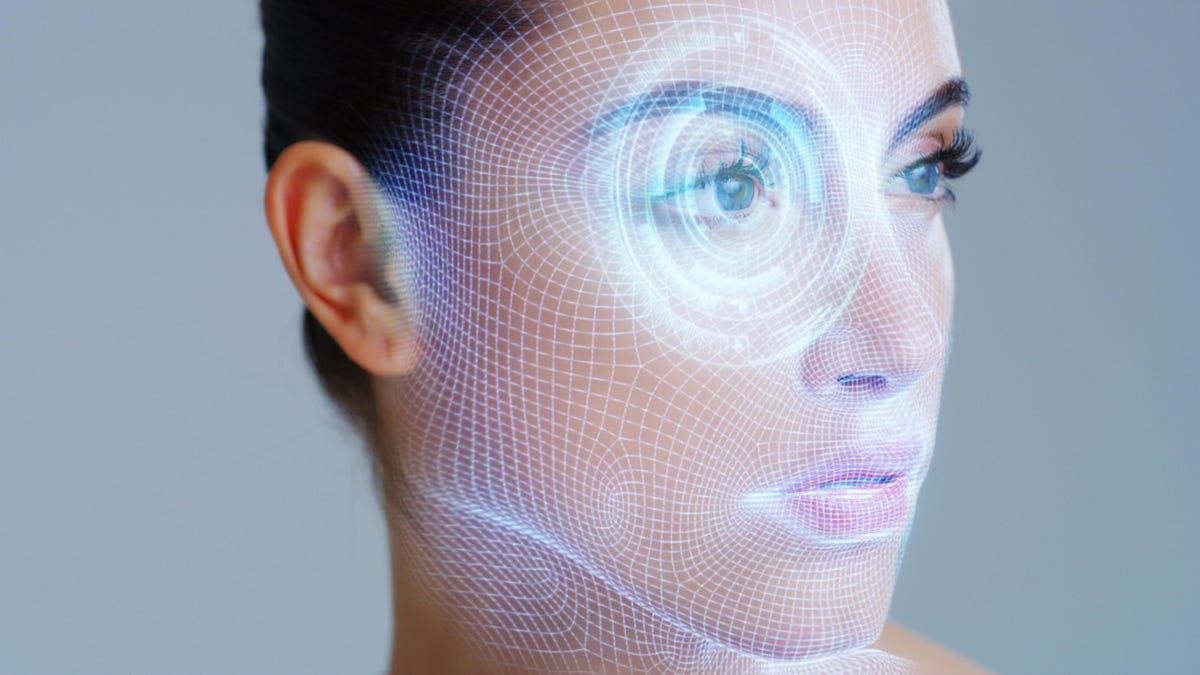

The AI Deepfakes Problem Is Going to Get Unstoppably Worse

(gizmodo.com)

from L4s@lemmy.world to technology@lemmy.world on 09 Feb 2024 22:00

https://lemmy.world/post/11768114

The AI Deepfakes Problem Is Going to Get Unstoppably Worse::Deepfakes are blurring the lines of reality more than ever before, and they’re likely going to get a lot worse this year.

This is the best summary I could come up with:

The world is being ripped apart by AI-generated deepfakes, and the latest half-assed attempts to stop them aren’t doing a thing.

Federal regulators outlawed deepfake robocalls on Thursday, like the ones impersonating President Biden in New Hampshire’s primary election.

“They’re here to stay,” said Vijay Balasubramaniyan, CEO of Pindrop, which identified ElevenLabs as the service used to create the fake Biden robocall.

The Federal Communications Commission (FCC) outlawing deepfake robocalls is a step in the right direction, according to Balasubramaniyan, but there’s minimal clarification on how this is going to be enforced.

OpenAI introduced watermarks to Dall-E’s images this week, both visually and embedded in a photo’s metadata.

There is some hope that technology and regulators are catching up to address this problem, but experts agree that deepfakes are only going to get worse before they get better.

The original article contains 478 words, the summary contains 138 words. Saved 71%. I’m a bot and I’m open source!

It is open source technology. There is no way to stop that; you can only push it underground. The solution is simply a healthy skepticism. In reality it is a good thing. Now, a political image must be presented with much more transparency and conservative rhetoric lest one’s actions and message become indistinguishable from satirical meme culture.

All authoritarian-promoting articles about AI regulations originate from the oligarch Altman’s quest to monopolize the next decade of technology using populism.

AI has very real risks but no one is effectively reporting these, like the Israeli AI weapons systems and developments surrounding drones in Ukraine right now.

Images of the orange insurrectionist do not matter. No AI is crazy enough to say worse things than that jackass or anyone else in the Red Jihadi Erotic Donkey show.

Healthy skepticism isn’t a solution, because most people do not possess this.

“I don’t know why everyone is overreacting. People just need to be way smarter and be more level headed. Problem solved.”

There’s also plenty of unhealthy skepticism going around (see the COVID pandemic).

One big problem is going to be that political supporters have been more than willing to assume anything they don’t like about their candidate is a “deep fake”, regardless of the fact that this has only been a recent possibility. You could have an authentic video of their favorite candidate telling everyone how stupid their supporters are, and those supporters will never believe it (or vice-versa, that easily-detectable fakes are made to smear a candidate, and the opposition will gobble it up).

Yeah, we’re going to see a lot of disgusting stuff like fake porn, but that was already being made from still photos, so of course we’re going to start seeing videos now. I think it will be interesting to see what happens in Hollywood, where actors’ voices are already being used without their consent. If laws get passed to discourage such things (and we’ve just seen the FCC ban the faking of politicians’ voices), they can also be used to curb other fakes of real people. I think that will help, but in the meantime it’s still the Wild West of AI-generated content.

People need to be more media literate and more skeptical of news stories instead of taking them at face value, regardless of deepfakery. So many articles that pass as “news” are filled with opinion and adjectives designed to elicit an emotional response.

People need to learn to look at a piece of information and ask questions.

Etc. Etc. Etc.

Even a Fox News article can have some insight into the goings on if you can parse the information from the spin. Deepfakes are just going to be another level of spin, but if people are informed enough, they’ll be able to logically differentiate between a real news story and a damning fake video.

However, that doesn’t solve the age-old problem of willfully ignorant people and confirmation bias…

You’re not wrong, but your suggestion is completely disconnected from reality. You cannot fix any problem in the world by appealing to the potential in people to behave intelligently and rationally. As a group, they never will. As a group, they are monkeys without tails, incapable of rational thought and behavior.

Don’t you mean ‘we’? Unless you don’t consider yourself human.

Jesus Christ, buddy.

Wait, there’s a religious spin on this?

Deepfake Revelations videos or something?

I guess you’re right. Might as well give up now, put zero effort into making anything better, and simply wallow in my own smug pessimism.

It’s worrying how much utter nonsense is published on AI and how little actually helpful advice. I hope law-makers don’t do too much damage.

Are you saying regulations would be harmful?

No, they said bs is published about ai.

They said “I hope lawmakers don’t do too much damage.” That’s what I was focusing on. How could lawmakers do damage to it? By attempting to regulate it. Hence my question.

Of course poor regulation can be bad; it was a silly, loaded question. Look at, for example, the 2002 tort reforms and the damage they did to public safety.

Imagine how much damage could be done to individual privacy and freedom by an ill-informed legislature if they elect to regulate gradient descent.

A lot of harmful AI regulations have been proposed. Some seem designed to benefit well-heeled interest groups at the expense of society, while others seem to be pure populism. Watermarks are an especially worrying example of the latter.

Whether “regulation” in the abstract is harmful is not a sensible question to me, which is probably because my ideological outlook is different to most people here, which is probably because my cultural background is different. I’ll resist the urge to give a long, rambling explanation.

Well, I am curious to hear at least a little about your different beliefs.

I mean, this is something I was thinking about earlier this week. In general, incrementalism and capitalism and neoliberalism are all harmful. Participating in them or advocating for their expansion while knowing overall that they’re harmful concepts seems to go against my beliefs. But as I see it, we are stuck in this system. I think a big thing a lot of leftists get wrong is arguing all or nothing. “I refuse to participate because I don’t believe in this system. I only advocate for the complete destruction of this system.” It’s very, very common.

Meanwhile, more harmful laws get passed because everyone who wants better sat out. Now, that mostly applies to voting, even on direct ballot measures. So it seems counterintuitive for me, as someone who doesn’t believe in the state, to advocate for regulations—a more robust state with more oversight powers. But expanded oversight is a bitter pill. It is necessary as long as this system exists. Advocating for less, again, in my position, would seem like my path forward. But I’d argue that incrementalism is less harmful than capitalism. And if the former can put a dent in the latter to safeguard against expanded harm, then I’m for it. Even though my “-ism” would supposedly preclude me from saying so.

I think regulating AI companies is necessary. I think watermarks are a pretty small price to pay for the basic concept of objective truth/proof of it. We are barreling toward a time where photographic evidence and audio evidence are suddenly all up for debate. Avoiding that future trumps any sort of concern I have for the industry. It’s a small step in the right direction, and I don’t have the solution or anything, but I think measures like this should be put into place.

I find the idea that anything in a modern economy could be unregulated to be simply incoherent. Property is acquired and transferred according to law. Contracts are governed by contract law. Whenever someone goes to court, they are appealing to one of the three branches of government. Enforcing these decisions involves another branch of the government.

I think that has to do with culture and historical experience. On the European continent, people emphasize the break that came with the French Revolution. British people talk about the Magna Carta and pretend that civil rights are something that somehow always existed.

US Americans also focus on discontinuities in some things; the American Revolution, Civil War, and maybe even the civil rights movement. But always the English Common Law remained an unquestioned background. It’s perhaps understandable if one forgets that the Common Law is law, and the judicial system is government.

If you look at the German experience, it becomes impossible to ignore that everything can be different. The end of the Holy Roman Empire after the French Revolution, the Wars of Liberation, the codification of German civil law, the Unification, the birth and end of the Weimar Republic, Nazi Terror, the two German states, capitalist and socialist.

The only constant is that society continues on. It never collapses, no matter what the strain. People are not naturally selfish. When push comes to shove, they do their duty, which is not, as such, a good thing.

So, it’s no surprise that Germany came up with Ordoliberalism. It emphasizes the role of government as creating the framework in which businesses exist. The government directly creates some markets and heavily influences others (e.g. illegal drug markets). Mind that there are many specific policies associated with this ideology that don’t result from this insight.

Of course, views that see government and its laws as the foundation of markets/markets as a tool wielded for the public benefit are hardly alien to the USA. It’s implicit in the copyright clause of the US Constitution.

I’m now all out of steam. I remind you that law-makers do not have the option of marking all AI generated content.

Most of the harm AI could do is already illegal. So most new regulations that add stricter rules will unavoidably be damaging one way or the other. For example, if we regulate too much, we leave all the progress to mega corps. Or we punish curious people too much or shift issues around instead of solving them. It’s going to get wild, for sure. My2Cents

Me again, re:watermarks.

Frauds, liars and even pranksters will not watermark their content, or remove watermarks. Best you can do is get genAI services to implement one, which they already do. It’s an insignificant business expense.

So there is a situation where most genAI content is marked, except that content which you actually want to identify. The result of watermarks must be to make fraudulent content more credible. It makes the situation worse.
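To put rough numbers on that argument, here is a minimal sketch using Bayes' rule. Everything in it is an assumption made up for illustration: the function name `p_ai_given_unmarked`, the 50% share of AI-generated content, and the 90% watermarking rate are hypothetical, and it assumes authentic content never carries a watermark.

```python
def p_ai_given_unmarked(p_ai: float, p_marked_given_ai: float) -> float:
    """Probability that an unmarked item is AI-generated (Bayes' rule).

    Assumption: authentic content is never watermarked.
    """
    unmarked_ai = p_ai * (1.0 - p_marked_given_ai)  # AI content that slipped through unmarked
    unmarked_real = 1.0 - p_ai                      # all authentic content is unmarked
    return unmarked_ai / (unmarked_ai + unmarked_real)


# Hypothetical world: half of all circulating images are AI-generated.
no_watermarking = p_ai_given_unmarked(p_ai=0.5, p_marked_given_ai=0.0)
wide_watermarking = p_ai_given_unmarked(p_ai=0.5, p_marked_given_ai=0.9)

print(f"P(AI | unmarked), no watermarking:   {no_watermarking:.2f}")
print(f"P(AI | unmarked), 90% watermarking:  {wide_watermarking:.2f}")
```

Under these made-up numbers, the more widely honest services watermark, the less suspicious an unmarked image looks, even though the fraudulent material all sits in that unmarked pool.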

My biggest worry is that we reach a situation similar to the war on drugs, where unthinking moral panic causes society to double down on harmful “solutions”. You have to think about how this could possibly be enforced and against whom.

GenAI models are about the size of a movie. That is to say that they can be torrented just as easily. Stopping people from sharing non-watermarked generators would require an unprecedented level of internet surveillance. The people caught would, IMHO, be the same kind of people caught torrenting movies; mainly kids. The fraudsters can be prosecuted for fraud anyway, if you catch them. A seriously enforced watermarking law would, IMHO, only prosecute kids and other basically harmless people (though they may be using genAI to bully and harass their peers).

Training AI models is not as expensive as one may think. The expensive part is the custom-made training data, as well as the research; the trial and error. Even something as massive as ChatGPT could be trained for less than $5 million. An image generator can probably be trained for less than $100k. In light of that report that someone defrauded a company of $25 million, that’s a cheap investment; maybe something you could monetize on the dark net. You’d have to successfully crack down on the dark net in unprecedented ways.

You’d need close monitoring of anything happening with cloud computing. You’d need to require licenses for high-end GPUs.

The problem isn’t that new in principle. I remember police advice to hang up and call back on a known number if someone identified themselves as a police officer on the phone. I also remember the 1964 movie Fail Safe; a Cold War classic. A squadron of US bombers is accidentally sent on a nuclear raid against Moscow. IDK if the depiction of military practice is in any way accurate. The bombers pass the fail-safe point, after which they can no longer be recalled. In an attempt to stop them, they are radioed by the president and even their wives. They ignore it as trained, because it might be a Soviet trick, imitating the voices. So, IDK if bomber crews were really ever trained to expect voice imitators, but even in the early 60s it must have seemed sufficiently credible to movie audiences.

No one saw this coming /s

I’ll be honest, I assumed the anti-deepfake tech would have been quicker on the draw.

I’m shocked you assumed this. Most people develop what makes the money. I’m not even sure who’s trying to develop anti-deepfake tech.

As that firm that got conned out of $25 million learned, there’s plenty of monetary incentive to have a warning system for spotting deep fakes.

I’m a little surprised we haven’t seen licensed deep fake pornography.

Like from an actor that doesn’t get numbers acting anymore but has (or had) sex appeal (in their prime).

Pam Anderson?

Maybe it’s because of copyright/consent for the other dataset.

In a perfect world we’d collectively agree the research and development of AI is too consequential for our society and agree to stop researching it. We don’t live in that world, sadly.

Only a luddite would do that. All new tech can be used for good and evil, but that’s not a good argument against innovation. Quality of life has gotten steadily better as technology got better, not worse. Unless you think AI is different for a good reason, such as the possibility of paperclip maximisers. But that doesn’t seem to be what you’re saying.