from blue_berry@lemmy.world to technology@lemmy.world on 29 May 2025 05:30

https://lemmy.world/post/30426640

Von Neumann’s idea of self-replicating automata describes machines that can reproduce themselves given a blueprint and a suitable environment. I’m exploring a concept that tries to apply this idea to AI in a modern context:

- AI agents (or “fungus nodes”) that run on federated servers

- They communicate via ActivityPub (used in Mastodon and the Fediverse)

- Each node can train models locally, then merge or share models with others

- Knowledge and behavior are stored in RDF graphs + code (acting like a blueprint)

- Agents evolve via co-training and mutation; they can switch learning groups and also choose to defederate from parts of the network
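The merge and mutation steps in the list above could be sketched roughly as follows. This is only a minimal illustration: the post does not say how models are merged, so the sketch assumes a weighted average of parameter vectors (in the style of federated averaging) and Gaussian noise as the mutation. The names `merge_models` and `mutate` are hypothetical.

```python
import random


def merge_models(models, weights=None):
    """Weighted average of equally sized parameter vectors.

    Assumption: a model is a flat list of floats. The original post
    does not specify the merge rule; averaging is just one option.
    """
    if weights is None:
        weights = [1.0] * len(models)
    total = sum(weights)
    n_params = len(models[0])
    return [
        sum(w * m[i] for m, w in zip(models, weights)) / total
        for i in range(n_params)
    ]


def mutate(model, scale=0.01, rng=random):
    """Return a copy of the model with small Gaussian noise added."""
    return [p + rng.gauss(0.0, scale) for p in model]


# Two hypothetical nodes; node_b counts three times as much,
# e.g. because it trained on three times as much data.
node_a = [0.0, 2.0, 4.0]
node_b = [2.0, 4.0, 8.0]
merged = merge_models([node_a, node_b], weights=[1, 3])
child = mutate(merged)
```

Weighting by local data size is a common choice in federated averaging, but any trust or reputation score between nodes could be used as the weight instead.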

This creates something like a digital ecosystem of AI agents growing across the social web. Nodes can freely train their own models, so shared models move across the network indirectly, in contrast to the siloed models of current federated learning.
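To make the idea of models moving across the network a bit more concrete, here is one way a node might announce a shared model as an ActivityStreams 2.0 activity. It is a sketch under assumptions: the post does not describe the message format, the `example` URLs and the helper `model_share_activity` are hypothetical, and only standard vocabulary (`Create`, `Document`, `url`, `mediaType`) is used.

```python
import json

AS_CONTEXT = "https://www.w3.org/ns/activitystreams"


def model_share_activity(actor, model_url, recipients):
    """Build a Create activity that points at a model file.

    Assumption: the model itself is fetched over HTTP from model_url;
    the activity only carries the reference, not the weights.
    """
    return {
        "@context": AS_CONTEXT,
        "type": "Create",
        "actor": actor,
        "to": recipients,
        "object": {
            "type": "Document",
            "name": "model checkpoint",
            "url": model_url,
            "mediaType": "application/octet-stream",
        },
    }


# Hypothetical actors and URLs, for illustration only.
activity = model_share_activity(
    actor="https://node-a.example/actors/fungus",
    model_url="https://node-a.example/models/latest.bin",
    recipients=["https://node-b.example/actors/fungus"],
)
payload = json.dumps(activity, indent=2)
```

Sending only a link keeps inbox deliveries small; the receiving node can decide whether the model is worth downloading at all.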

My question: Is this kind of architecture - blending self-replicating AI agents, federated learning, and social protocols like ActivityPub - feasible and scalable in practice? Or are there fundamental barriers (technical, theoretical, or social) that would limit it?

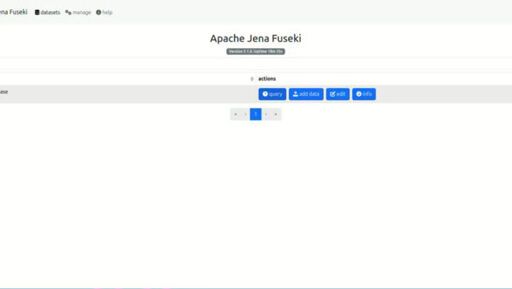

I started implementing this with four microservices per node (frontend, backend, a knowledge graph using Apache Jena Fuseki, and an ActivityPub communicator); however, it brings my laptop to its limits even with 8 nodes.

The question could also be stated differently: how much compute would be necessary to trigger non-trivial behaviours that generate enough value to sustain the overall system?


Your bottleneck will probably be communication speed if you want any kind of performance in a reasonable time. Small nodes will have to exchange many small messages very often; big nodes will have to send larger payloads, even if the data is more refined.
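A back-of-the-envelope estimate shows why. The sketch below assumes a model is sent as raw parameters over a link with fixed bandwidth and latency; the function `transfer_seconds` and all the numbers are illustrative assumptions, not measurements.

```python
def transfer_seconds(n_params, bytes_per_param, bandwidth_mbit_s, latency_s=0.0):
    """Rough time to send one full model over a single link.

    Ignores protocol overhead, compression and retries.
    """
    size_bits = n_params * bytes_per_param * 8
    return latency_s + size_bits / (bandwidth_mbit_s * 1_000_000)


# 1 million float32 parameters over a 100 Mbit/s link.
small = transfer_seconds(1_000_000, 4, 100)

# 1 billion float32 parameters over the same link.
large = transfer_seconds(1_000_000_000, 4, 100)

print(f"small model: {small:.2f} s, large model: {large:.0f} s")
```

Under these assumptions a 1M-parameter model takes about a third of a second per exchange, while a 1B-parameter model takes over five minutes, and that cost is paid again every time two nodes sync.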

Yeah, that’s a good point. Also, given that nodes could be fairly far apart from one another, this could become a serious problem.

I love how casually you just dropped this 😂.

I’m working on something similar but it’s not ready for its FOSS release yet.

Feel free to message me if you wanna discuss ideas.

Cool. Well, the feedback so far has been rather lukewarm. But that’s fine, I’m now going more in a P2P direction. It would be cool to have a way for everybody to participate in the training of big AI models in case HuggingFace enshittifies.

Train them to do what?

Currently the nodes only recommend music (and are not really good at it tbh). But theoretically, it could be all kinds of machine learning problems (then again, there is the issue with scaling and quality of the training results).

I think everyone has thought of what you described, but most lacked the mental discipline to think it through in specifics.

That’s all I wanted to say, very cool, good luck.

(I personally would prefer thinking of it as a distributed computer: tasks distributed among members of a group with some redundancy and a graph of execution dependencies, with results merged, so that a node retrieves the initial state only once. But I lack knowledge of the fundamentals.)
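A toy version of that idea, as a sketch only: tasks form a dependency graph, are ordered so that dependencies run first, and each task is assigned to several nodes for redundancy. The task names, node names and the `schedule` helper are hypothetical; the ordering uses `graphlib.TopologicalSorter` from the Python standard library (3.9+).

```python
from graphlib import TopologicalSorter
from itertools import cycle


def schedule(dependencies, nodes, redundancy=2):
    """Order tasks by their dependencies and assign each to several nodes.

    dependencies maps a task to the set of tasks it depends on.
    Assumption: redundancy <= len(nodes), so the replicas of one
    task land on distinct nodes when walking the ring.
    """
    order = list(TopologicalSorter(dependencies).static_order())
    ring = cycle(nodes)
    plan = {task: [next(ring) for _ in range(redundancy)] for task in order}
    return order, plan


# Hypothetical job: load the initial state once, train two parts, merge.
deps = {
    "merge": {"train_a", "train_b"},
    "train_a": {"load"},
    "train_b": {"load"},
}
order, plan = schedule(deps, nodes=["n1", "n2", "n3"], redundancy=2)

for task in order:
    print(task, "->", plan[task])
```

A real system would also have to compare the redundant results and handle nodes that drop out, which this sketch leaves out entirely.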

Thanks :)